CenterPoint: Optimized for Qualcomm Devices

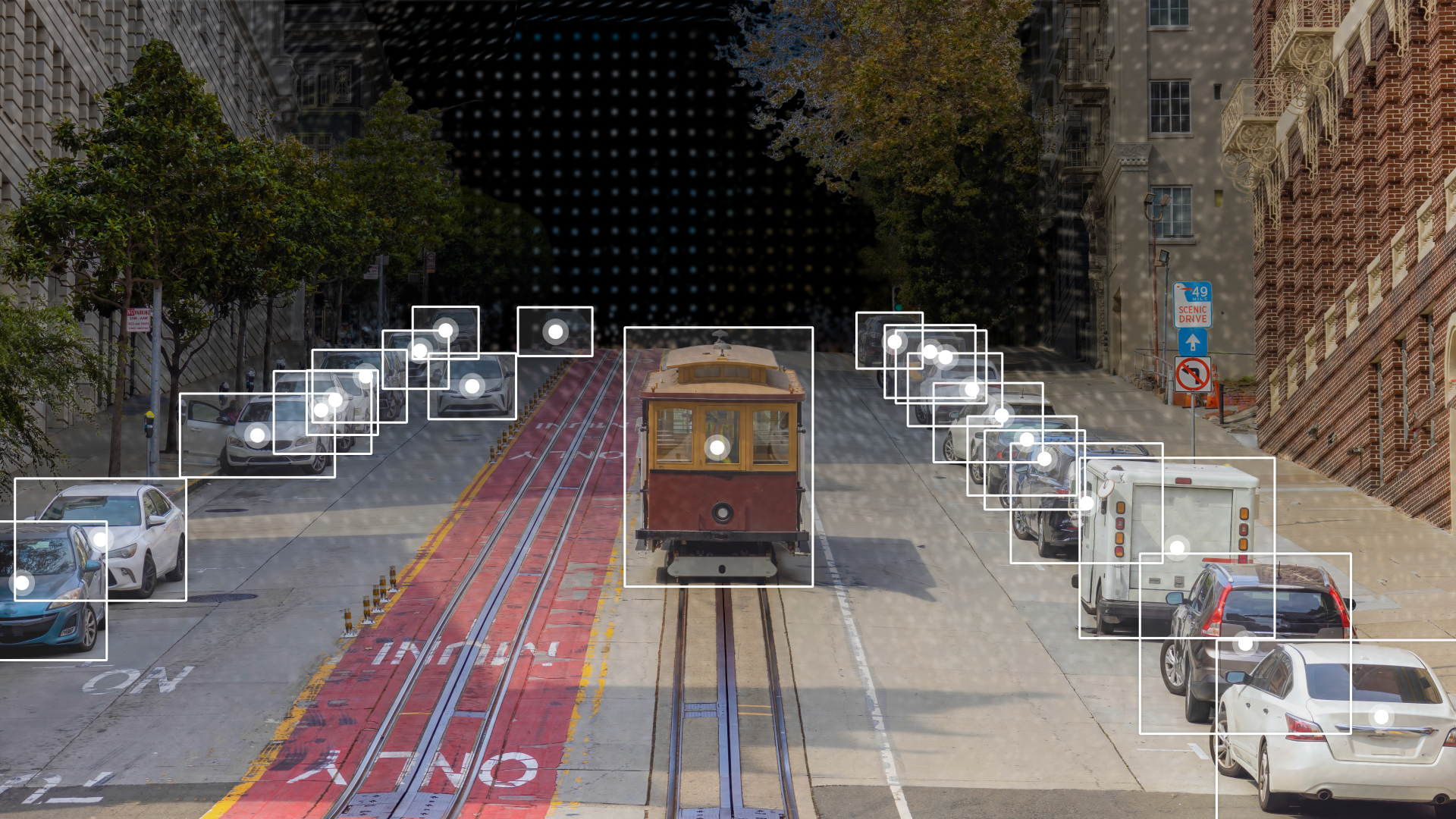

CenterPoint is a LiDAR-based 3D object detection model that detects objects by predicting their centers and regressing other attributes. It is designed for high accuracy and real-time performance in autonomous driving applications.
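To make the center-based formulation more concrete, here is a minimal pure-Python sketch of how center peaks can be pulled out of a bird's-eye-view class heatmap, with regressed attributes then read at each peak.

It rests on several assumptions:

- There is a single class heatmap and a per-cell attribute map. The example uses a hypothetical `(width, length)` pair.
- The helper name `decode_centers` is made up for illustration.
- The 3x3 local-maximum rule is a CenterNet-style peak test, and the 0.3 threshold is an arbitrary illustrative value.

This is not the post-processing that ships with this model.

```python
from typing import List, Tuple

Attr = Tuple[float, float]  # hypothetical regressed attributes, e.g. (width, length)


def decode_centers(
    heatmap: List[List[float]],
    attrs: List[List[Attr]],
    threshold: float = 0.3,
) -> List[Tuple[int, int, float, Attr]]:
    """Return (row, col, score, attributes) for every local-maximum center.

    Hypothetical helper: a cell is treated as an object center when its score
    is at least `threshold` and equals the maximum of its 3x3 neighbourhood.
    """
    rows, cols = len(heatmap), len(heatmap[0])
    detections = []
    for r in range(rows):
        for c in range(cols):
            score = heatmap[r][c]
            if score < threshold:
                continue
            # Max over the 3x3 window, clipped at the grid border
            # (a CenterNet-style max-pool peak test).
            window_max = max(
                heatmap[rr][cc]
                for rr in range(max(r - 1, 0), min(r + 2, rows))
                for cc in range(max(c - 1, 0), min(c + 2, cols))
            )
            if score == window_max:
                # Other attributes are regressed, then read at the peak cell.
                detections.append((r, c, score, attrs[r][c]))
    return detections


# Toy 4x4 heatmap with two peaks; every cell carries the same made-up size.
heatmap = [
    [0.1, 0.2, 0.1, 0.0],
    [0.2, 0.9, 0.3, 0.1],
    [0.1, 0.3, 0.2, 0.6],
    [0.0, 0.1, 0.4, 0.5],
]
sizes = [[(1.8, 4.5)] * 4 for _ in range(4)]
print(decode_centers(heatmap, sizes))
```

Two cells survive the peak test: the 0.9 peak at (1, 1) and the 0.6 peak at (2, 3). Neighbouring high-scoring cells are suppressed because they are not the maximum of their own window. Real pipelines typically add per-class heatmaps, sub-cell offsets, height and heading regression, and some form of NMS on top of this.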

This repository contains pre-exported model files optimized for Qualcomm® devices. You can use the Qualcomm® AI Hub Models library to export with custom configurations. More details on model performance across various devices can be found here.

Qualcomm AI Hub Models uses Qualcomm AI Hub Workbench to compile, profile, and evaluate this model. Sign up to run these models on a hosted Qualcomm® device.

Getting Started

There are two ways to deploy this model on your device:

Option 1: Download Pre-Exported Models

Below are pre-exported model assets ready for deployment.

| Runtime | Precision | Chipset | SDK Versions | Download |
|---|---|---|---|---|
| QNN_DLC | float | Universal | QAIRT 2.45 | Download |
| TFLITE | float | Universal | | Download |

For more device-specific assets and performance metrics, visit CenterPoint on Qualcomm® AI Hub.

Option 2: Export with Custom Configurations

Use the Qualcomm® AI Hub Models Python library to compile and export the model with your own:

- Custom weights (e.g., fine-tuned checkpoints)

- Custom input shapes

- Target device and runtime configurations

This option is ideal if you need to customize the model beyond the default configuration provided here.

See our repository for CenterPoint on GitHub for usage instructions.

Model Details

Model Type: Driver assistance

Model Stats:

- Model checkpoint: PointPillars

- Input resolution: 5x20x5, 5x4, 5

- Number of parameters: 21.8M

- Model size: 83.3 MB
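The parameter count and model size listed above are roughly consistent with uncompressed 32-bit float weights. Below is a back-of-the-envelope check. It assumes 4 bytes per parameter and reads "MB" as mebibytes (2^20 bytes); the card states neither, so treat both as assumptions. The helper name `float32_size_mib` is hypothetical.

```python
def float32_size_mib(num_params: float) -> float:
    """Approximate weight storage in MiB, assuming 4 bytes per float32 parameter."""
    bytes_per_param = 4  # float32 (assumption: no quantization or compression)
    return num_params * bytes_per_param / 2**20


# 21.8M parameters, as listed in Model Stats (rounded to one decimal place).
print(f"{float32_size_mib(21.8e6):.1f} MiB")
```

Under these assumptions the estimate comes out at about 83.2 MiB. Reading "MB" as 10^6 bytes would give about 87.2 MB, so the MiB interpretation fits the listed 83.3 MB better. The remaining gap is within the rounding of the 21.8M figure: 21.75M to 21.85M parameters spans roughly 83.0 to 83.4 MiB.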

Performance Summary

| Model | Runtime | Precision | Chipset | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit |
|---|---|---|---|---|---|---|
| CenterPoint | QNN_DLC | float | Snapdragon® 8 Elite Gen 5 Mobile | 176.658 | 2 - 717 | NPU |
| CenterPoint | QNN_DLC | float | Snapdragon® X2 Elite | 184.862 | 2 - 2 | NPU |
| CenterPoint | QNN_DLC | float | Snapdragon® X Elite | 324.739 | 2 - 2 | NPU |
| CenterPoint | QNN_DLC | float | Snapdragon® 8 Gen 3 Mobile | 249.275 | 0 - 751 | NPU |
| CenterPoint | QNN_DLC | float | Qualcomm® QCS8275 (Proxy) | 920.055 | 0 - 450 | NPU |
| CenterPoint | QNN_DLC | float | Qualcomm® QCS8550 (Proxy) | 330.139 | 2 - 1294 | NPU |
| CenterPoint | QNN_DLC | float | Qualcomm® QCS9075 | 422.489 | 2 - 11 | NPU |
| CenterPoint | QNN_DLC | float | Qualcomm® QCS8450 (Proxy) | 517.071 | 2 - 679 | NPU |
| CenterPoint | QNN_DLC | float | Snapdragon® 8 Elite For Galaxy Mobile | 210.248 | 0 - 461 | NPU |
| CenterPoint | TFLITE | float | Snapdragon® 8 Elite Gen 5 Mobile | 2413.453 | 1868 - 1878 | CPU |
| CenterPoint | TFLITE | float | Snapdragon® 8 Gen 3 Mobile | 3868.195 | 1866 - 1874 | CPU |
| CenterPoint | TFLITE | float | Qualcomm® QCS8275 (Proxy) | 6220.268 | 1846 - 1855 | CPU |
| CenterPoint | TFLITE | float | Qualcomm® QCS8550 (Proxy) | 4701.028 | 1833 - 1835 | CPU |
| CenterPoint | TFLITE | float | Qualcomm® QCS9075 | 5154.454 | 2364 - 2385 | CPU |
| CenterPoint | TFLITE | float | Qualcomm® QCS8450 (Proxy) | 5728.45 | 1837 - 1847 | CPU |
| CenterPoint | TFLITE | float | Snapdragon® 8 Elite For Galaxy Mobile | 2852.211 | 1852 - 1861 | CPU |
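The table reports per-inference latency, so two quantities are easy to derive:

- Throughput, computed as 1000 / latency in ms. This assumes sequential inferences with no batching or pipelining.
- The QNN_DLC-over-TFLITE speedup, for chipsets listed with both runtimes.

The sketch below does this for a few rows copied from the table. The two runtimes also differ in primary compute unit (NPU vs. CPU), so the ratio reflects both factors. The helper `throughput_fps` is hypothetical.

```python
# Latencies (ms) copied from the Performance Summary table above.
latency_ms = {
    "Snapdragon 8 Elite Gen 5 Mobile": {"QNN_DLC": 176.658, "TFLITE": 2413.453},
    "Snapdragon 8 Gen 3 Mobile": {"QNN_DLC": 249.275, "TFLITE": 3868.195},
    "Qualcomm QCS9075": {"QNN_DLC": 422.489, "TFLITE": 5154.454},
}


def throughput_fps(ms: float) -> float:
    """Inferences per second, assuming back-to-back sequential runs."""
    return 1000.0 / ms


for chipset, runs in latency_ms.items():
    npu_ms, cpu_ms = runs["QNN_DLC"], runs["TFLITE"]
    print(
        f"{chipset}: QNN_DLC {throughput_fps(npu_ms):.2f} FPS, "
        f"TFLITE {throughput_fps(cpu_ms):.2f} FPS, "
        f"speedup {cpu_ms / npu_ms:.1f}x"
    )
```

For these rows the NPU path works out to roughly 12x to 16x faster than the CPU path.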

License

- The license for the original implementation of CenterPoint can be found here.

Community

- Join our AI Hub Slack community to collaborate, post questions and learn more about on-device AI.

- For questions or feedback, please reach out to us.